3D representations

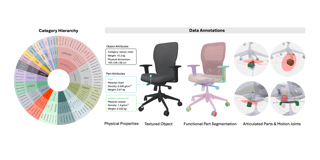

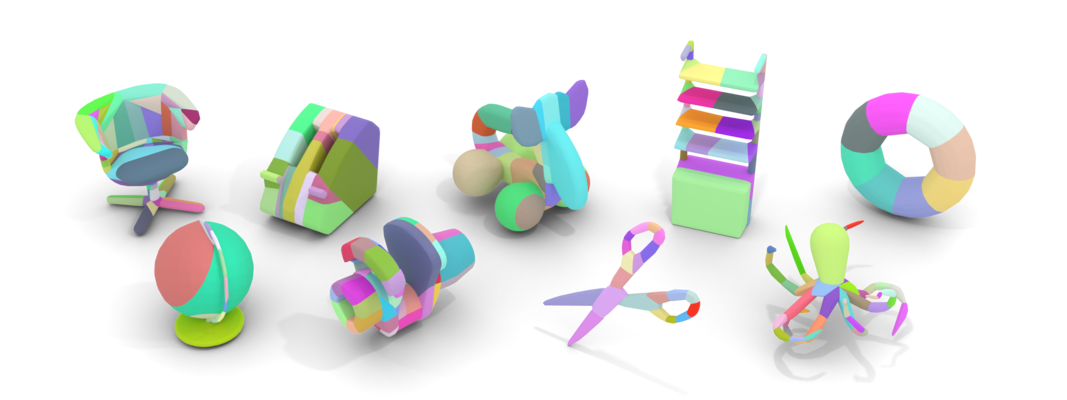

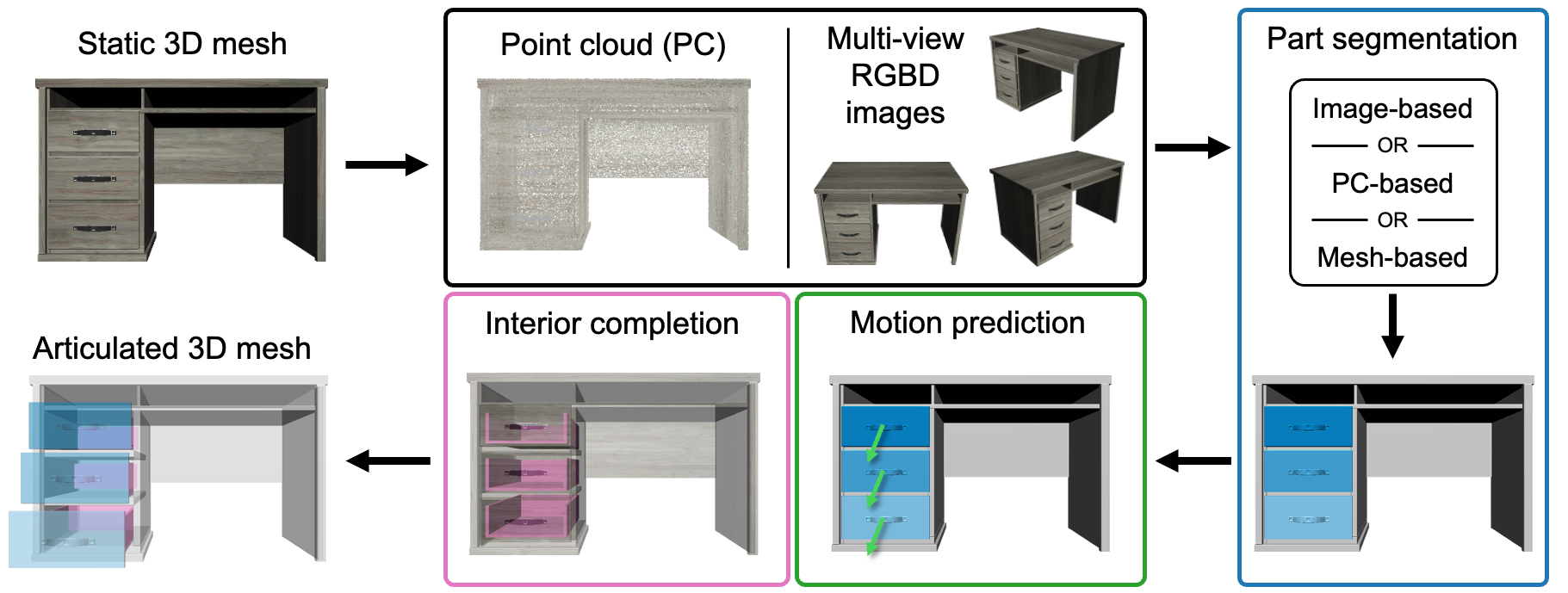

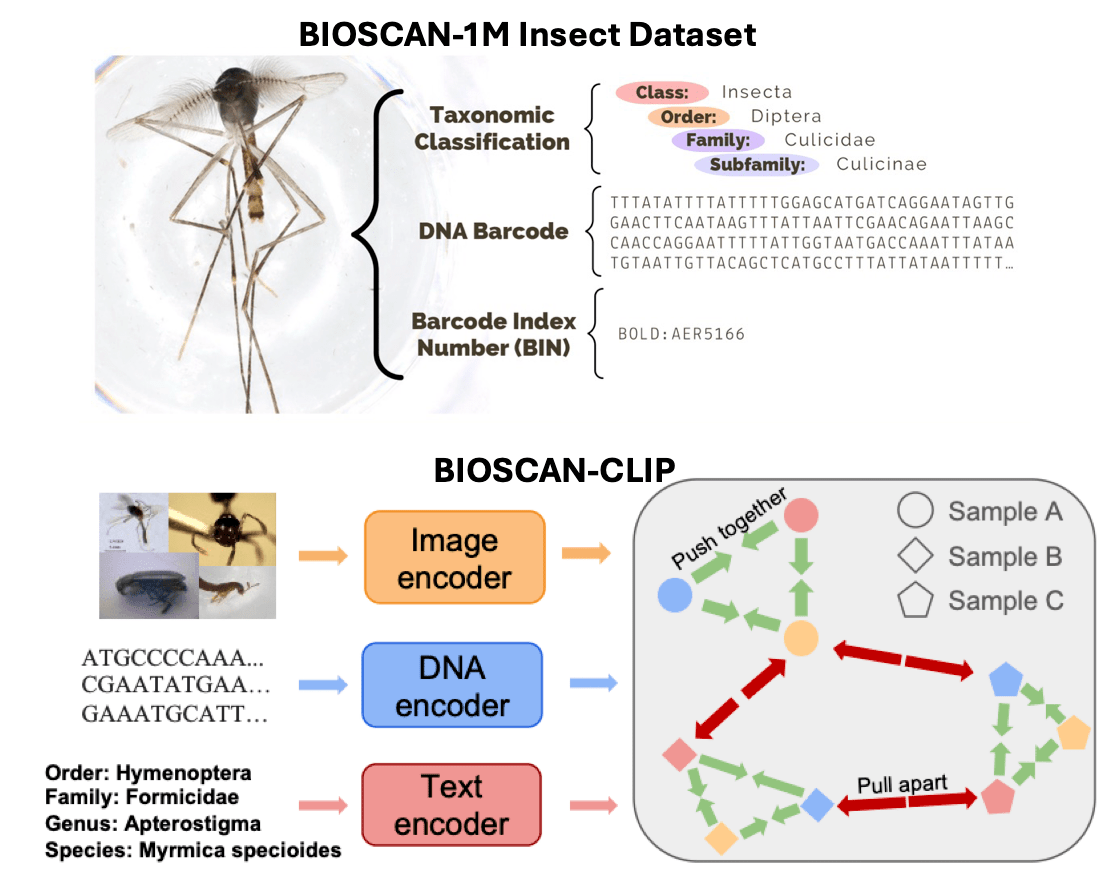

We build datasets, models, and benchmarks for understanding shapes, scenes, articulated objects, and human-object interactions.

We study how machines perceive, describe, generate, and interact with 3D worlds through language, geometry, embodied AI, and generative models.

The 3DLG group (3D, Language, Generation) focuses on research involving 3D representations, natural language, and 3D content generation.

We connect natural language to 3D perception through visual grounding, dense captioning, question answering, and multimodal embeddings.

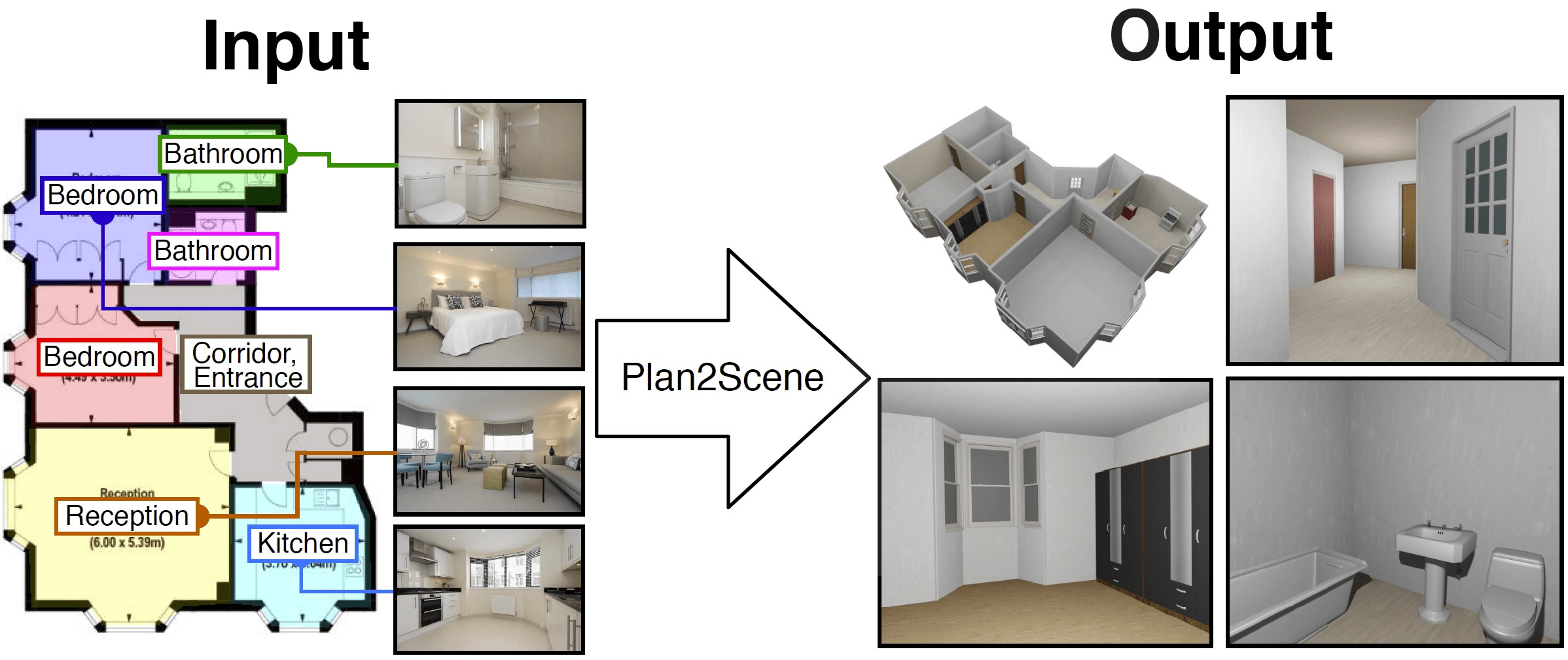

We study scene synthesis, text-to-3D generation, digital twins, and controllable models for interactive environments.

Selected recent papers from the group.

A snapshot of active directions across the lab.

Talks, publications, conference activity, and lab updates.

Talks

June 3rd (am) - Angel will be giving a talk at the Workshop on Multimodal Spatial Intelligence

June 3rd (pm) - Angel will be giving a talk at the Workshop on U...

3DV 2026 in Vancouver!

Papers