Rebuilding Visual Spatial Intelligence Evaluation for Accurate Assessment of VLM 3D Reasoning

VSI-Bench derives ground-truth answers for question-answer pairs from low-quality 3D reconstructions and noisy annotations in scene datasets (e.g., ScanNet v2, ScanNet++ v2, ARKitScenes), leading to a substantial portion of incorrect ground-truth answers across tasks.

VSI-Bench Ground-Truth Quality

Diagnostics on object counting and object size tasks

GT Correctness · Object Counting

Object counting shows notable incorrectness and ambiguity (e.g., ill-defined object criteria like shoes).

“Door” Height Distribution (cm)

Door height distributions as proxies show systematic obj. size errors. Red bins denote physically implausible sizes.

Statistics are derived from human annotations.

VSI-Bench QA Pairs

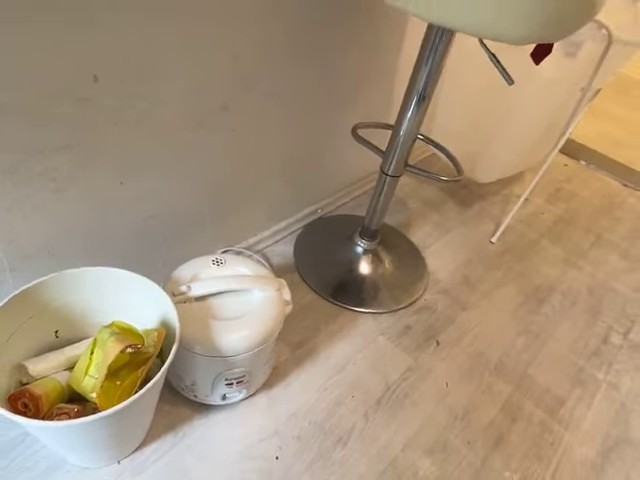

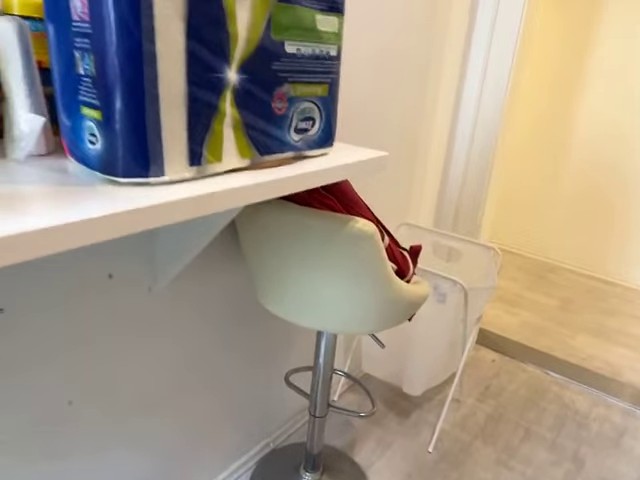

How many chair(s) are in this room?

How many table(s) are in this room?

What is the length of the longest dimension (length, width, or height) of the door, measured in centimeters?

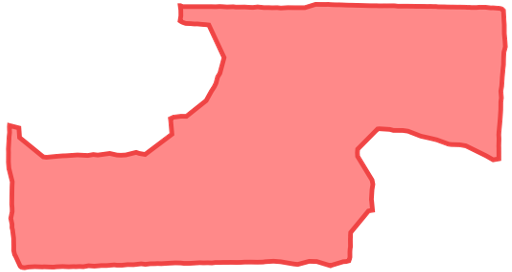

What is the size of this room (in square meters)? If multiple rooms are shown, estimate the size of the combined space.

VSI-Bench QA Pairs

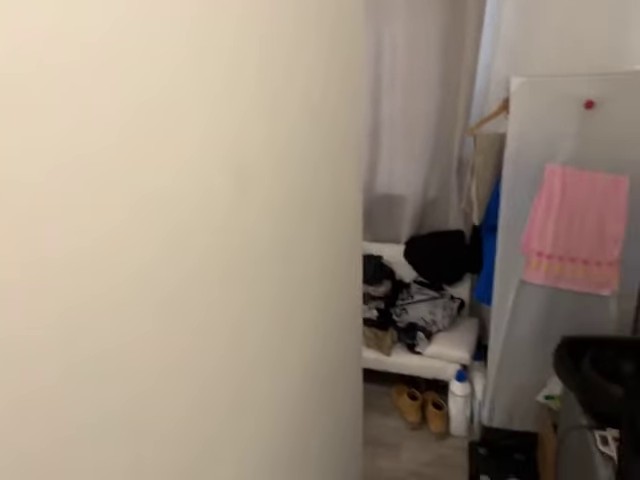

How many chair(s) are in this room?

What is the length of the longest dimension (length, width, or height) of the door, measured in centimeters?

What is the size of this room (in square meters)? If multiple rooms are shown, estimate the size of the combined space.

Prior evaluations assume full-scene access, whereas most vision-language models operate on sparsely sampled frames (e.g., 16–64). This mismatch leads to missing objects and geometries, rendering a large portion of questions unanswerable or incorrect.

Questions are unanswerable when queried objects are absent, and incorrect when frame-based answers deviate from full-scene ground truth.

VSI-Bench Question Answerability & Correctness

by Frame Budget

Statistics are derived from human annotations. Video frames are uniformly sampled using np.linspace.

VSI-Bench QA Pairs

Measuring from the closest point of each object, what is the distance between the telephone and the tv (in meters)?

Telephone is invisible under 16-Frame sampling

How many suitcase(s) are in this room?

Visible suitcase count varies with frame sampling rate

What will be the first-time appearance order of the following categories in the video: sofa, door, suitcase, kettle?

Suitcase and sofa appear in the same frame

• Rethink • Rebuild • Reevaluate

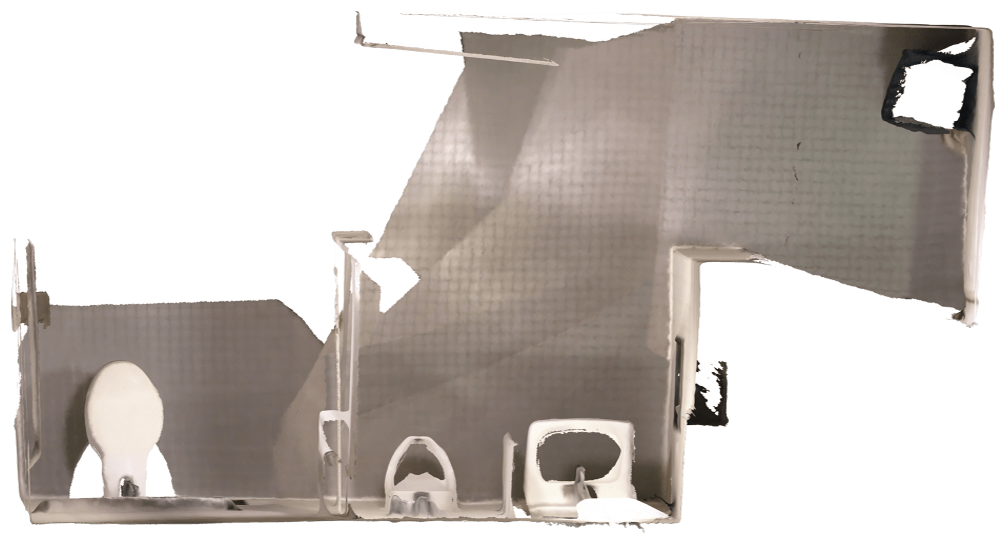

Every ReVSI scene is fully annotated by 3D experts — without any heuristic or model-predicted shortcuts — and passes multiple rounds of video-aware verification, ensuring accurate object names, instance identity, physical size, and faithful room-area boundaries.

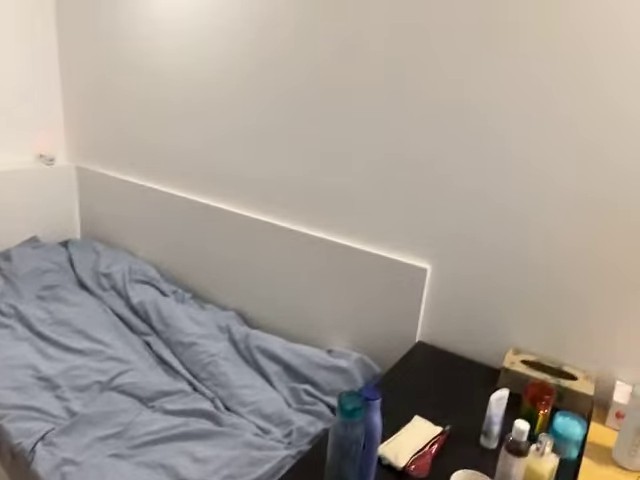

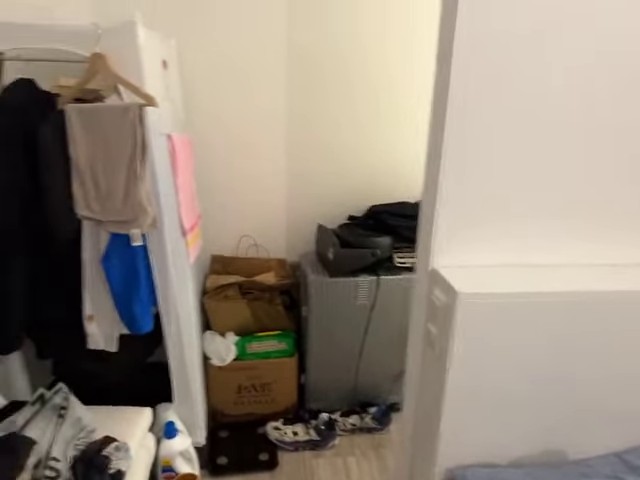

scene0441_00 · ScanNet v2

scene0441_00 · ScanNet v2

ReVSI covers more scenes, more object instances, and a substantially larger object vocabulary than VSI-Bench, with open-vocabulary support that frees evaluation from a fixed label set.

Answers are spread evenly across the value range for both numerical and multiple-choice questions, preventing models from scoring high by exploiting skewed answer frequencies.

Ground-truth answers adapt to different input frame budget, reflecting what the model actually sees.

Question: How many chair(s) are in this room?

Answer:

Evaluations on ReVSI with corresponding VSI-Bench scores shown in parentheses. For ReVSI, each model is evaluated against frame-sampling-specific ground-truth answers corresponding to its inference frames (shown in Frames column), whereas VSI-Bench uses a single set of ground-truth answers shared across all frame settings. ReVSI scores that exceed their VSI-Bench counterparts are highlighted in green.

| Method | Frames | Numerical Question | Multiple-Choice Question | Avg. | |||||

|---|---|---|---|---|---|---|---|---|---|

| Obj. Cnt. | Abs. Dist. | Obj. Size | Room Size | Rel. Dist. | Rel. Dir. | Route Plan | |||

| Baseline | |||||||||

| Chance (Random) | ALL | - | - | - | - | 23.7 25.0 | 26.8 36.1 | 26.0 28.3 | - |

| Chance (Frequency) | ALL | 52.2 62.1 | 40.1 32.0 | 17.4 29.9 | 20.9 33.1 | 25.8 25.1 | 31.9 47.9 | 30.2 28.4 | 31.4 34.0 |

| Proprietary Models (API) | |||||||||

| GPT-5.2 | 64 | 56.2 57.1 | 41.5 33.4 | 73.9 64.6 | 63.0 59.0 | 48.4 48.0 | 34.9 33.3 | 38.2 36.7 | 50.9 49.2 |

| Gemini 3 Flash | 1 FPS | 65.7 45.6 | 53.1 36.3 | 77.6 74.9 | 52.8 47.4 | 64.6 54.3 | 47.9 52.4 | 41.8 50.0 | 57.6 55.9 |

| Gemini 3 Pro | 1 FPS | 60.1 45.3 | 54.7 38.3 | 79.3 73.0 | 51.9 47.4 | 68.1 70.0 | 56.0 60.8 | 56.4 65.3 | 60.9 60.5 |

| Open-Source Models | |||||||||

| Qwen3-VL-8B-Instruct | 64 | 40.4 70.0 | 52.3 50.5 | 69.0 74.7 | 45.1 63.3 | 57.1 57.3 | 39.5 52.3 | 40.5 33.5 | 49.1 57.4 |

| Qwen3-VL-32B-Instruct | 64 | 46.9 74.0 | 65.0 57.0 | 70.4 76.6 | 55.8 70.8 | 53.8 55.6 | 34.0 59.1 | 47.3 39.7 | 53.3 61.8 |

| InternVL3.5-8B | 64 | 43.3 72.7 | 54.6 40.3 | 64.2 68.4 | 47.6 65.3 | 45.0 57.0 | 36.3 48.6 | 44.4 35.6 | 47.9 55.4 |

| InternVL3.5-38B | 64 | 43.8 73.9 | 60.6 39.2 | 70.2 73.0 | 58.4 65.0 | 57.4 66.2 | 45.9 72.0 | 42.7 36.1 | 54.1 60.8 |

| LLaVA-Video-7B-Qwen2 | 64 | 31.3 50.6 | 1.4 13.3 | 52.5 44.7 | 16.7 23.8 | 38.3 43.7 | 33.3 42.7 | 38.4 35.6 | 30.3 36.3 |

| LLaVA-Video-72B-Qwen2 | 64 | 40.1 51.9 | 29.6 24.0 | 59.3 57.4 | 27.9 32.7 | 39.6 42.4 | 24.8 37.4 | 43.0 32.0 | 37.8 39.7 |

Performance of specialized 3D VLMs and their base models on ReVSI with corresponding VSI-Bench scores shown in parentheses. ReVSI evaluates each model under its native inference frame setting using frame-adaptive ground-truth answers. Scores lower than the base model are highlighted in red.

| Method | Frames | Numerical Question | Multiple-Choice Question | Avg. | |||||

|---|---|---|---|---|---|---|---|---|---|

| Obj. Cnt. | Abs. Dist. | Obj. Size | Room Size | Rel. Dist. | Rel. Dir. | Route Plan | |||

| Qwen2.5-7B-Instruct+SigLIP2 | -- | -- | -- | -- | -- | -- | -- | -- | -- |

| Cambrian-S-7B | 128 | 48.4 73.2 | 60.5 50.5 | 65.5 74.9 | 46.7 72.2 | 37.1 71.1 | 48.5 76.2 | 37.0 41.8 | 49.1 67.5 |

| Qwen2.5-VL-7B-Instruct | 4 FPS | 36.9 36.8 | 15.0 17.6 | 49.7 51.0 | 29.0 29.2 | 31.5 35.4 | 29.5 38.4 | 36.7 33.5 | 32.6 32.6 |

| VST-7B-SFT | 4 FPS | 35.4 72.0 | 52.6 44.4 | 67.9 74.3 | 47.2 68.3 | 49.2 59.7 | 36.9 55.8 | 35.4 44.9 | 46.4 65.2 |

| Qwen2.5-VL-7B-Instruct | 32 | 34.3 43.7 | 21.7 22.3 | 45.5 49.2 | 35.1 37.5 | 32.6 40.1 | 33.7 38.9 | 34.1 32.0 | 33.9 37.7 |

| SpaceR-7B (SG-RLVR) | 32 | 30.7 61.9 | 34.5 28.6 | 52.0 60.9 | 18.6 35.2 | 22.8 38.2 | 34.5 46.0 | 20.2 31.4 | 30.5 43.5 |

| Qwen2.5-VL-3B-Instruct | 16 | 18.7 24.3 | 15.6 24.7 | 16.8 31.7 | -- 22.6 | 33.2 38.3 | 34.3 41.6 | -- 26.3 | 23.7 30.6 |

| Spatial-MLLM-4B-135k | 16 | 40.7 65.8 | 45.3 40.7 | 46.8 58.3 | -- 55.6 | 32.3 43.2 | 37.4 55.5 | -- 36.1 | 40.5 50.7 |

| Spatial-MLLM-4B-820k | 16 | 41.5 66.7 | 40.0 37.9 | 53.1 69.7 | -- 55.7 | 30.7 52.0 | 39.2 54.9 | -- 39.7 | 40.9 53.8 |

| LLaVA-Video-7B-Qwen2 | 32 | 29.9 48.5 | 1.5 14.0 | 53.0 47.8 | 19.3 24.2 | 39.1 43.5 | 33.8 42.4 | 38.8 34.0 | 30.8 36.3 |

| VLM3R-7B | 32 | 41.6 70.2 | 61.6 49.4 | 64.8 69.2 | 52.5 67.1 | 46.5 65.4 | 49.5 80.5 | 34.1 45.4 | 50.1 60.9 |

Object counting results on dummy videos where the ground-truth answer is always 0. Query-Drop removes frames containing the queried object while preserving the surrounding scene and other objects. First-Frame repeats the first frame of the Query-Drop video across all frames. Black uses pure black frames for the whole video. Exact Match accuracy is reported over 997 questions, with 0 as the only correct answer. Fine-tuned models tend to lean on strong priors learned from training data when answering, often overlooking the actual visual input.

Three dummy-video constructions used to probe whether models rely on visual evidence when answering object-counting questions.

| Method | Accuracy (Exact Match) | ||

|---|---|---|---|

| Query-Drop | First-Frame | Black | |

| Zero-shot | |||

| Human | 100.0 | 100.0 | 100.0 |

| GPT-5.2 | 74.0 | 89.6 | 99.2 |

| Gemini 3 Pro | 62.3 | 85.0 | 94.0 |

| Qwen2.5-VL-7B-Instruct | 55.8 | 79.9 | 99.8 |

| Qwen3-VL-8B-Instruct | 34.7 | 80.9 | 99.8 |

| Qwen3-VL-32B-Instruct | 50.5 | 92.7 | 100.0 |

| InternVL3.5-8B | 14.7 | 52.5 | 17.7 |

| InternVL3.5-38B | 9.1 | 45.0 | 1.2 |

| LLaVA-Video-7B-Qwen2 | 47.7 | 49.9 | 0.0 |

| LLaVA-Video-72B-Qwen2 | 45.0 | 65.6 | 15.2 |

| Fine-tuned | |||

| Cambrian-S-7B | 1.1 | 2.8 | 0.0 |

| VST-7B-SFT | 1.1 | 8.7 | 0.4 |

| SpaceR-7B (SG-RLVR) | 8.1 | 24.3 | 14.6 |

| Spatial-MLLM-4B-135k | 0.2 | 0.9 | 0.0 |

| Spatial-MLLM-4B-820k | 0.0 | 0.0 | 0.0 |

| VLM3R-7B | 4.1 | 2.5 | 0.2 |

ReVSI supports the following inference and evaluation frameworks:

If you find this work useful, please consider citing our paper:

@article{zhang2026revsi,

title={ReVSI: Rebuilding Visual Spatial Intelligence Evaluation for Accurate Assessment of VLM 3D Reasoning},

author={Zhang, Yiming and Chen, Jiacheng and Tan, Jiaqi and Mao, Yongsen and Chen, Wenhu and Chang, Angel X.},

journal={arXiv preprint arXiv:2604.24300},

year={2026}

}

This work was funded in part by a CIFAR AI Chair and NSERC Discovery Grants, and enabled by support from Digital Research Alliance of Canada and a CFI/BCKDF JELF grant. We thank Jiayi Liu for help with benchmark data verification and discussion.