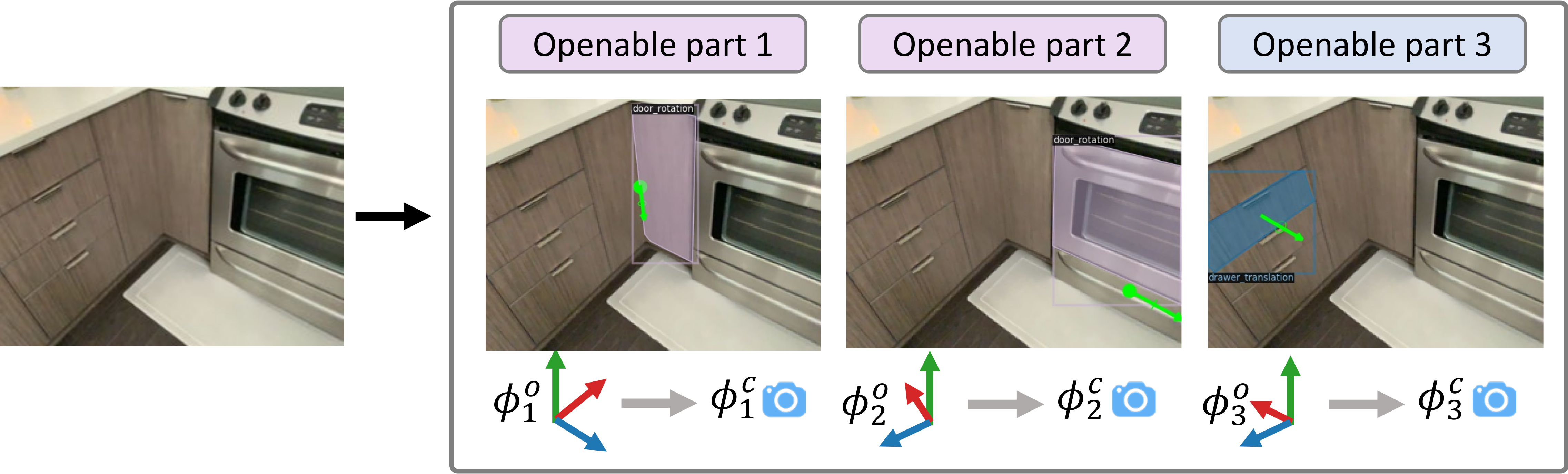

We tackle the openable-part-detection (OPD) problem, where we identify the openable parts in a single-view image together with their motion parameters. Our OPDFormer architecture outputs segmentations for openable parts on potentially multiple objects, along with each part’s motion parameters: motion type (translation or rotation, indicated by a blue or purple mask), motion axis, and motion origin (see green arrows and points). For each openable part, we predict the motion parameters (axis and origin) in object coordinates, along with an object pose prediction that is used to convert them to camera coordinates.
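The conversion from object coordinates to camera coordinates mentioned above can be illustrated with a minimal sketch. It assumes the predicted object pose is available as a 4x4 row-major rigid transform that maps object coordinates to camera coordinates; the function name `motion_to_camera` and this pose convention are hypothetical and are not taken from the OPDMulti code. The origin is a point, so it is rotated and translated, while the axis is a direction, so it is only rotated.

```python
import math
from typing import List, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def motion_to_camera(
    pose: Sequence[Sequence[float]],
    origin_obj: Sequence[float],
    axis_obj: Sequence[float],
) -> Tuple[Vec3, Vec3]:
    """Map a motion origin and axis from object to camera coordinates.

    Assumption: `pose` is a 4x4 row-major rigid transform [R | t; 0 0 0 1]
    that takes object coordinates to camera coordinates.
    """
    rotation: List[Sequence[float]] = [row[:3] for row in pose[:3]]
    translation = [row[3] for row in pose[:3]]

    # The origin is a point: apply rotation, then translation.
    origin_cam = tuple(
        sum(rotation[i][j] * origin_obj[j] for j in range(3)) + translation[i]
        for i in range(3)
    )

    # The axis is a direction: apply rotation only.
    axis_rot = [
        sum(rotation[i][j] * axis_obj[j] for j in range(3)) for i in range(3)
    ]
    norm = math.sqrt(sum(c * c for c in axis_rot))
    if norm == 0.0:
        raise ValueError("motion axis must be non-zero")
    axis_cam = tuple(c / norm for c in axis_rot)

    return origin_cam, axis_cam


if __name__ == "__main__":
    # Example pose: 90 degree rotation about z, then a shift by (1, 2, 3).
    example_pose = [
        [0.0, -1.0, 0.0, 1.0],
        [1.0, 0.0, 0.0, 2.0],
        [0.0, 0.0, 1.0, 3.0],
        [0.0, 0.0, 0.0, 1.0],
    ]
    print(motion_to_camera(example_pose, (1.0, 0.0, 0.0), (1.0, 0.0, 0.0)))
```

Treating the axis as a direction keeps it independent of where the object sits in the camera frame, which is why only the rotational part of the pose is applied to it.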

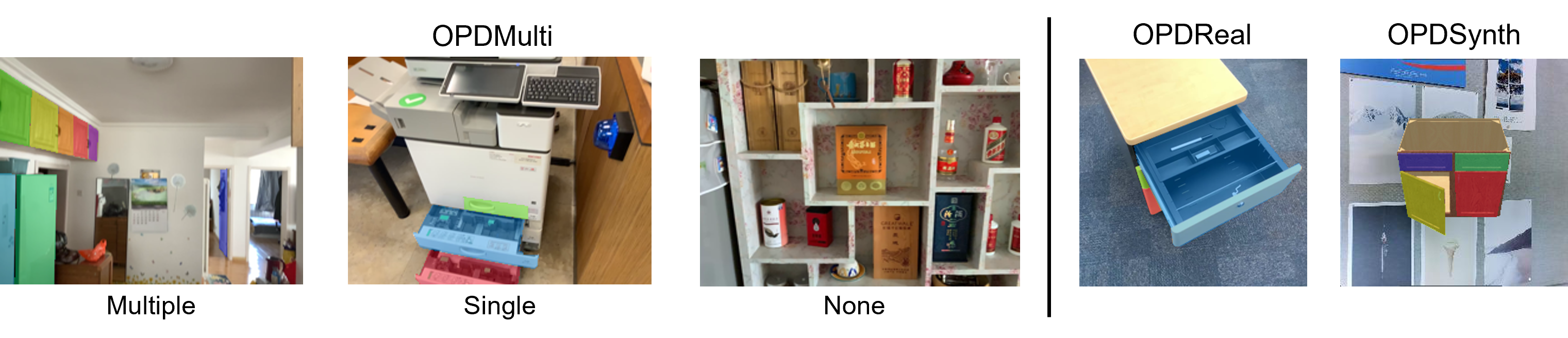

OPDMulti Task and Dataset

Comparison of images from OPDMulti (left) with images from OPDReal and OPDSynth. Our OPDMulti dataset is more realistic and diverse, with images taken from varied viewpoints and containing multiple, single, or no openable objects.

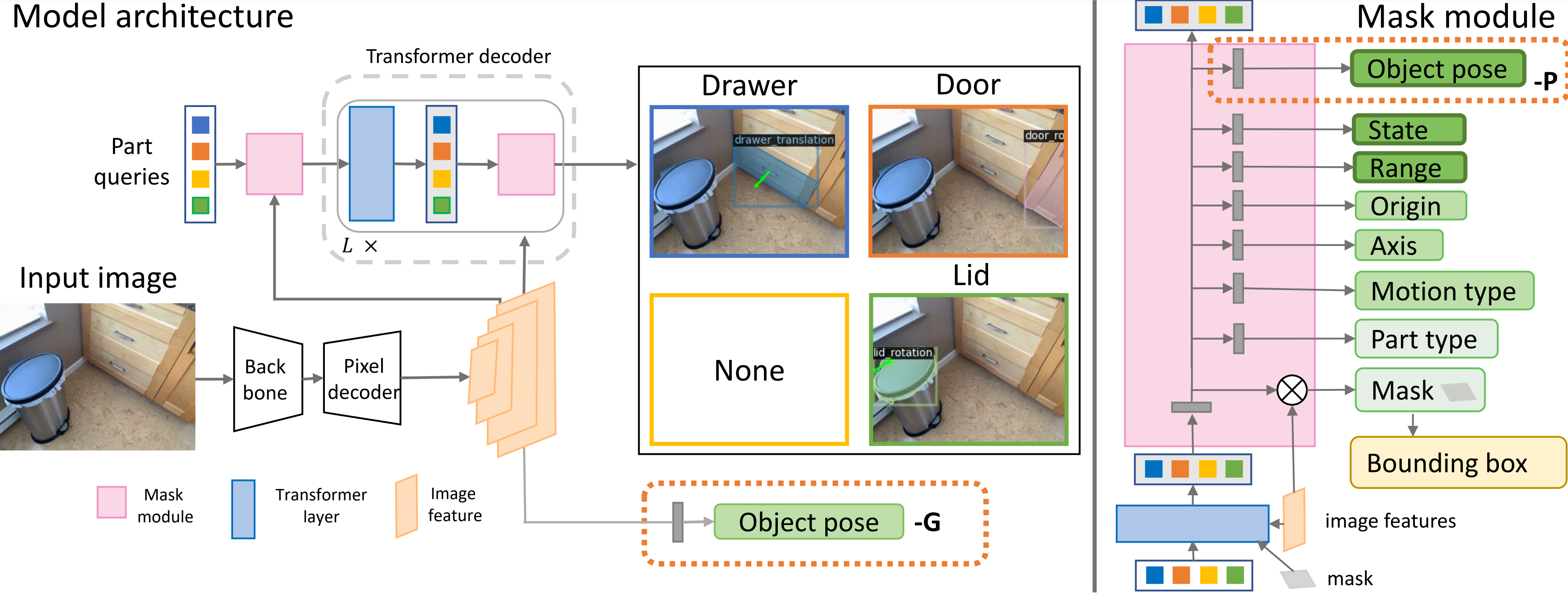

OPDFormer Structure

Illustration of OPDFormer variants, which are based on a Mask2Former backbone. The left side shows the overall network structure, while the right shows the mask module in detail. The mask module predicts part motion parameters (motion origin MO, motion axis MA, motion type MT, motion state, motion range and part class in green boxes). The detected parts indicated in the center correspond to the queries indicated by colored boxes on the right side.

Motion State Animation

| RGB | | | | |
|---|---|---|---|---|
| Animation | | | | |

Download

To download the OPDMulti dataset (7.1G), please use the download link. Please see the OPDMulti code repo for more details on the dataset.

We provide the pretrained model weights for our model variants with RGB, depth, and RGB-D inputs on both the OPDMulti and OPDReal datasets. For OPDMulti, we initialize our network with the weights trained on OPDReal and then train on OPDMulti.

| Model Name | Input | PDet | +M | +MA | +MAO | OPDMulti Model | OPDReal Model |
|---|---|---|---|---|---|---|---|
| OPDFormer-C | RGB | 29.1 | 28.0 | 13.5 | 12.3 | model(169M) | model(169M) |
| OPDFormer-O | RGB | 27.8 | 26.3 | 5.0 | 1.5 | model(175M) | model(175M) |
| OPDFormer-P | RGB | 31.4 | 30.4 | 18.9 | 15.1 | model(169M) | model(169M) |
| OPDFormer-C | depth | 20.9 | 18.9 | 11.4 | 10.1 | model(169M) | model(169M) |
| OPDFormer-O | depth | 23.4 | 21.5 | 5.9 | 1.9 | model(175M) | model(175M) |
| OPDFormer-P | depth | 21.7 | 19.8 | 15.4 | 13.5 | model(169M) | model(169M) |
| OPDFormer-C | RGBD | 24.2 | 22.7 | 14.1 | 13.4 | model(169M) | model(169M) |
| OPDFormer-O | RGBD | 23.1 | 21.2 | 6.7 | 2.6 | model(175M) | model(175M) |
| OPDFormer-P | RGBD | 27.4 | 25.5 | 18.1 | 16.7 | model(169M) | model(169M) |