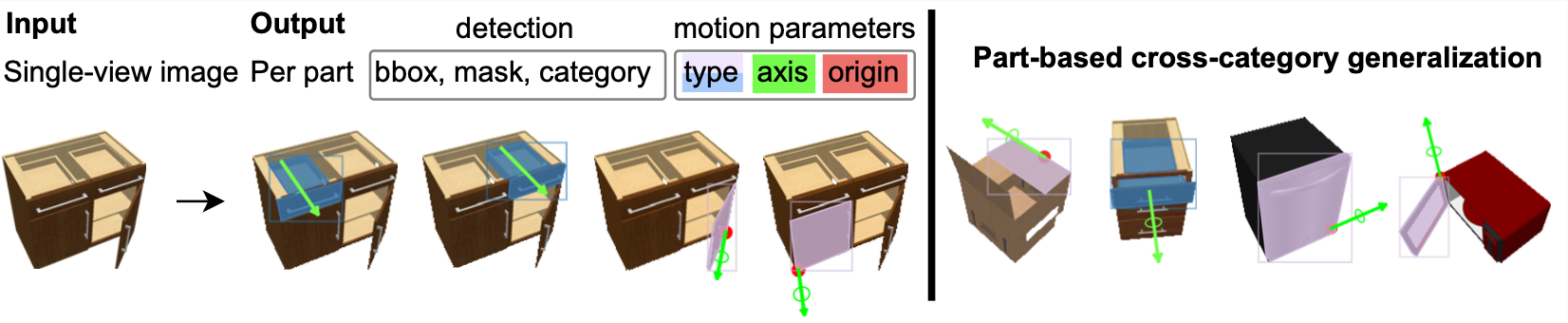

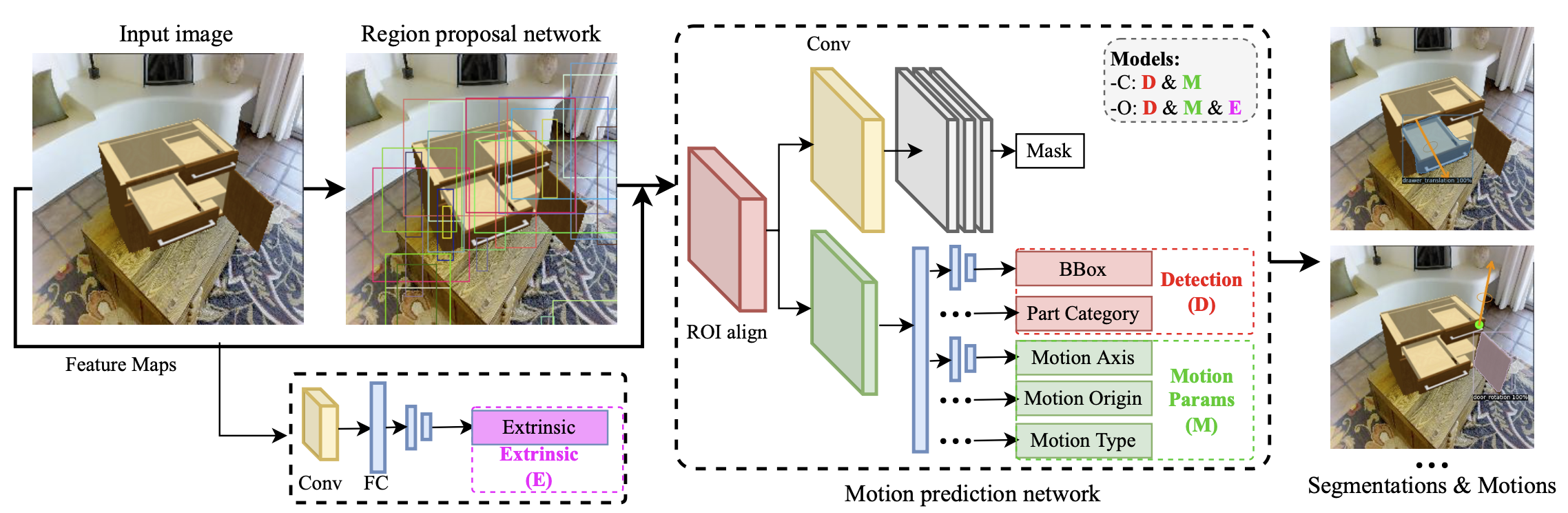

We address the task of predicting which parts of an object can open and how they move when they do so. The input is a single image of an object; as output, we detect the parts of the object that can open and predict the motion parameters describing the articulation of each openable part. To tackle this task, we create two datasets of 3D objects: OPDSynth, based on existing synthetic objects, and OPDReal, based on RGBD reconstructions of real objects. We then design OPDRCNN, a neural architecture that detects openable parts and predicts their motion parameters. Our experiments show that this is a challenging task, especially given the need to generalize across object categories and the limited amount of information in a single image. Our architecture outperforms baselines and prior work, especially for RGB image inputs.

Video

Overview

Example articulated objects from the OPDSynth and OPDReal datasets. The first row is from OPDSynth. Left: different openable part categories (lid in orange, drawer in green, door in red). Right: cabinet objects with different kinematic structures and varying numbers of openable parts. The second row is from OPDReal. Left: a reconstructed cabinet and its semantic part segmentation. Right: example reconstructed objects from different categories.

Illustration of the network structure for our OPDRCNN-C and OPDRCNN-O architectures. We leverage a MaskRCNN backbone to detect openable parts. Additional heads are trained to predict motion parameters for each part.
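To make the notion of per-part motion parameters concrete, the following is a minimal sketch of how a predicted part could be represented and articulated. It assumes that a part's motion is described by a motion type, an axis direction, and (for rotations) an origin point on the axis. The class `OpenablePart`, its field names, and the helper `move_point` are hypothetical and are not taken from the OPD code base.

```python
import math
from dataclasses import dataclass
from typing import Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class OpenablePart:
    # All field names are hypothetical, chosen only for illustration.
    category: str     # e.g. "drawer", "door", or "lid"
    motion_type: str  # assumed encoding: "translation" or "rotation"
    axis: Vec3        # direction of the motion axis (need not be unit length)
    origin: Vec3      # a point on the rotation axis; unused for translation


def _normalize(v: Vec3) -> Vec3:
    norm = math.sqrt(sum(c * c for c in v))
    if norm == 0.0:
        raise ValueError("motion axis must be non-zero")
    return (v[0] / norm, v[1] / norm, v[2] / norm)


def move_point(part: OpenablePart, point: Vec3, amount: float) -> Vec3:
    """Move a point on the part by `amount` (distance for a translation,
    radians for a rotation) according to the part's motion parameters."""
    k = _normalize(part.axis)
    if part.motion_type == "translation":
        # Slide the point along the unit axis.
        return (point[0] + amount * k[0],
                point[1] + amount * k[1],
                point[2] + amount * k[2])
    if part.motion_type == "rotation":
        # Rodrigues' rotation formula about the axis through `origin`.
        v = (point[0] - part.origin[0],
             point[1] - part.origin[1],
             point[2] - part.origin[2])
        cos_t, sin_t = math.cos(amount), math.sin(amount)
        k_cross_v = (k[1] * v[2] - k[2] * v[1],
                     k[2] * v[0] - k[0] * v[2],
                     k[0] * v[1] - k[1] * v[0])
        k_dot_v = k[0] * v[0] + k[1] * v[1] + k[2] * v[2]
        rotated = tuple(
            v[i] * cos_t + k_cross_v[i] * sin_t + k[i] * k_dot_v * (1.0 - cos_t)
            for i in range(3)
        )
        return (rotated[0] + part.origin[0],
                rotated[1] + part.origin[1],
                rotated[2] + part.origin[2])
    raise ValueError(f"unknown motion type: {part.motion_type!r}")


# Usage: a drawer sliding along +z and a door hinged on a vertical axis.
drawer = OpenablePart("drawer", "translation", (0.0, 0.0, 2.0), (0.0, 0.0, 0.0))
door = OpenablePart("door", "rotation", (0.0, 0.0, 1.0), (1.0, 0.0, 0.0))
drawer_point = move_point(drawer, (1.0, 2.0, 3.0), 0.3)
door_point = move_point(door, (2.0, 0.0, 0.0), math.pi / 2)
```

In this sketch a drawer needs only an axis, while a door also needs the hinge location, which is why the origin is stored per part but ignored for translations.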

Download

The datasets and pre-trained models for our three repositories are available for download below.

OPD Project : OPD Dataset OPD Pre-trained Models

OPDPN Baseline Project : OPDPN Dataset OPDPN Pre-trained Models

ANCSH Baseline Project : ANCSH Dataset ANCSH Pre-trained Models